The rapid proliferation of advanced AI systems in the public domain has resulted in widespread usage by individuals and businesses. These systems are exceptionally adaptable to various tasks, including content generation and code creation through natural language prompts. However, this accessibility has opened the door for threat actors to use AI for sophisticated attacks. Adversaries can leverage AI to automate attacks, speed up routines, and execute more complex operations to achieve their goals.

AI as a Powerful Tool

We have observed several ways cybercriminals are using AI:

1. ChatGPT can be used for writing malicious software and automating attacks against multiple users.

2. AI programs can log users’ smartphone inputs by analysing accelerometer data, potentially capturing messages, passwords, and bank codes.

3. Swarm intelligence can operate autonomous botnets that communicate with each other to restore malicious networks after damage.

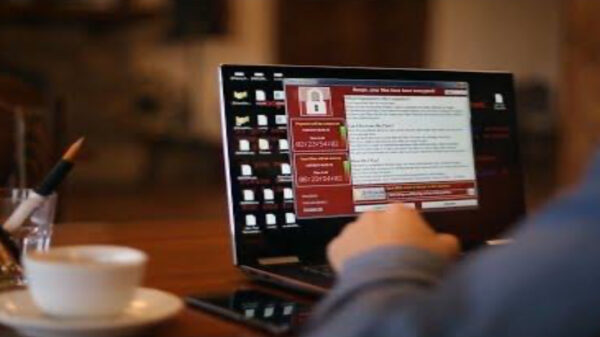

Kaspersky recently conducted another comprehensive study on the use of AI for password cracking. Most passwords are stored not as plain text but as hashes produced by cryptographic hash functions such as MD5 and SHA. While it’s simple to convert a plaintext password into a hash, reversing the process is challenging. Unfortunately, password database leaks occur regularly, affecting both small companies and tech leaders.
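To illustrate why hashing is easy in one direction but a leaked hash of a weak password is still at risk, here is a minimal Python sketch. It is not Kaspersky's tooling: the function names `hash_password` and `find_weak_password` and the tiny candidate list are hypothetical, chosen only for this example, and SHA-256 stands in for whichever hash function a given service uses.

```python
import hashlib
from typing import Iterable, Optional


def hash_password(password: str, algorithm: str = "sha256") -> str:
    """Forward direction: turning a password into a hash is a single cheap call."""
    return hashlib.new(algorithm, password.encode("utf-8")).hexdigest()


def find_weak_password(
    target_hash: str,
    candidates: Iterable[str],
    algorithm: str = "sha256",
) -> Optional[str]:
    """Reverse direction: there is no inverse function, so the only option is
    to hash guesses and compare. Returns the matching guess, or None."""
    for candidate in candidates:
        if hash_password(candidate, algorithm) == target_hash:
            return candidate
    return None


# Hypothetical audit: check whether a stored hash matches a short list of
# common passwords (the list is illustrative, not taken from any leak).
COMMON_PASSWORDS = ["123456", "password", "qwerty", "letmein"]

stored_hash = hash_password("password")
print(find_weak_password(stored_hash, COMMON_PASSWORDS))
```

The hash itself is never reversed. A guess succeeds only because the password was predictable, which is why common or short passwords remain exposed once a hash database leaks.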

In July 2024, the largest leaked password compilation to date was published online, containing about 10 billion lines with 8.2 billion unique passwords.

Alexey Antonov, Lead Data Scientist at Kaspersky, stated, “We analysed this massive data leak and found that 32% of user passwords are not strong enough and can be reverted from encrypted hash form using a simple brute-force algorithm and a modern GPU 4090 in less than 60 minutes.” He added, “We also trained a language model on the password database and tried to check passwords with the obtained AI method. We found that 78% of passwords could be cracked this way, which is about three times faster than using a brute-force algorithm. Only 7% of those passwords are strong enough to resist a long-term attack.”
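The brute-force figure quoted above comes down to simple arithmetic: the number of candidate passwords divided by the number of hashes a GPU can test per second. The sketch below shows that calculation only, not the language-model approach. The hash rate is a hypothetical round number chosen for illustration, not a measured figure for any particular GPU or hash function, and the function names are invented for this example.

```python
def keyspace(alphabet_size: int, max_length: int) -> int:
    """Count every possible password of length 1..max_length over the alphabet."""
    return sum(alphabet_size ** length for length in range(1, max_length + 1))


def worst_case_seconds(
    alphabet_size: int, max_length: int, hashes_per_second: float
) -> float:
    """Time needed to try every candidate at the given (assumed) hash rate."""
    return keyspace(alphabet_size, max_length) / hashes_per_second


# ASSUMPTION: an illustrative rate of 100 billion hashes per second.
# Real rates vary widely with the GPU and the hash function in use.
ASSUMED_HASHES_PER_SECOND = 1e11

# Compare lowercase-only passwords with passwords drawn from ~95 printable
# ASCII characters, at a few lengths.
for alphabet_size, label in [(26, "lowercase"), (95, "printable ASCII")]:
    for max_length in (8, 10, 12):
        seconds = worst_case_seconds(
            alphabet_size, max_length, ASSUMED_HASHES_PER_SECOND
        )
        print(f"{label:16s} up to {max_length:2d} chars: {seconds:.3g} s")
```

Under this assumed rate, lowercase passwords of up to eight characters are exhausted in a matter of seconds, while each extra character or larger alphabet multiplies the search time. This is why length and character variety matter more than any single clever substitution.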

Social Engineering with AI

AI can also be used for social engineering to generate plausible-looking content, including text, images, audio, and video. Threat actors can use large language models such as GPT-4o to generate scam text, including sophisticated phishing messages. AI-generated phishing can overcome language barriers and create personalised emails based on users’ social media information. It can even mimic specific individuals’ writing styles, making phishing attacks potentially harder to detect.

Deepfakes present another cybersecurity challenge. What was once just scientific research has now become a widespread issue. Criminals have tricked many people with celebrity impersonation scams, leading to significant financial losses. Deepfakes are also used to hijack user accounts and to send friends and relatives voice messages requesting money in the account owner’s voice.

Sophisticated romance scams involve criminals creating fake personas and communicating with victims on dating sites. One of the most elaborate attacks occurred in February in Hong Kong, where scammers simulated a video conference call using deepfakes to impersonate company executives, convincing a finance worker to transfer approximately $25 million.

AI Vulnerabilities

Besides using AI for harmful purposes, adversaries can also attack AI algorithms themselves. These attacks include:

1. Prompt injection attacks on large language models, where attackers create requests that bypass previous prompt restrictions.

2. Adversarial attacks on machine learning algorithms, where hidden information in images or audio can confuse AI and force incorrect decisions.
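The second item in the list above can be made concrete with a toy example. The sketch below applies a small, bounded perturbation to the input of a linear classifier, stepping each feature against the sign of its weight. This is the idea behind gradient-sign attacks, reduced to the simplest possible model. The weights, bias, input, and function names are all hypothetical values chosen for illustration and do not come from any real system.

```python
from typing import List


def sign(value: float) -> int:
    """Return -1, 0, or 1 according to the sign of value."""
    return (value > 0) - (value < 0)


def score(weights: List[float], bias: float, features: List[float]) -> float:
    """Linear model: weighted sum of the features plus a bias."""
    return sum(w * x for w, x in zip(weights, features)) + bias


def classify(weights: List[float], bias: float, features: List[float]) -> int:
    """Class 1 if the score is positive, otherwise class 0."""
    return 1 if score(weights, bias, features) > 0 else 0


def perturb(
    weights: List[float], features: List[float], epsilon: float, target_class: int
) -> List[float]:
    """Shift every feature by at most epsilon in the direction that moves the
    score toward target_class. For a linear model the gradient of the score
    with respect to the input is simply the weight vector."""
    direction = 1 if target_class == 1 else -1
    return [x + direction * epsilon * sign(w) for w, x in zip(weights, features)]


# Hypothetical toy model and input.
WEIGHTS = [2.0, -1.0, 0.5]
BIAS = -0.5
ORIGINAL = [0.5, 0.2, 0.4]

adversarial = perturb(WEIGHTS, ORIGINAL, epsilon=0.2, target_class=0)
print(classify(WEIGHTS, BIAS, ORIGINAL), classify(WEIGHTS, BIAS, adversarial))
```

No feature changes by more than `epsilon`, yet the predicted class flips. Real attacks on image or audio models follow the same principle across thousands of input dimensions, where the per-feature change can be too small for a person to notice.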

As AI becomes more integrated into our lives through products like Apple Intelligence, Google Gemini, and Microsoft Copilot, addressing AI vulnerabilities becomes crucial.

Kaspersky’s Use of AI

Kaspersky has been using AI technologies to protect customers for many years. We employ various AI models to detect threats and continuously research AI vulnerabilities to make our technologies more resistant. We also actively study different harmful techniques to provide reliable protection against offensive AI.